@Fraizer :

Could you please repeat the question you would like me to answer?

I’m sorry, I think I’ve lost track of what remained unanswered.

There is more than one, haha ^^ No problem, I'll copy and paste my post:

Hi @Ethaniel,

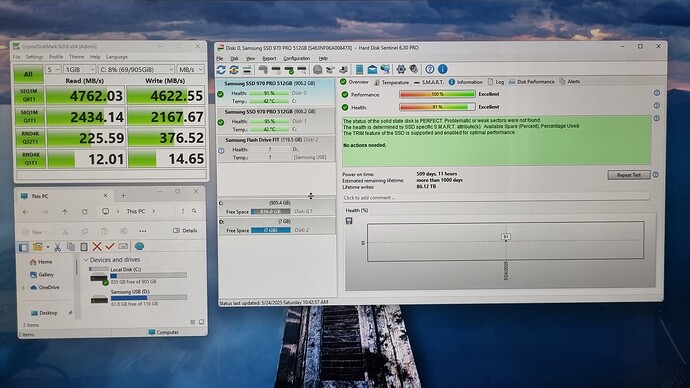

As you can see in my first post, that is a non-RAID benchmark of my NVMe model from a website. I ran tests before, and the RAID speed was not that bad. The only thing is that I forgot to wipe the data on one of those 2 NVMe drives (I used one of them just for a test, but both are new).

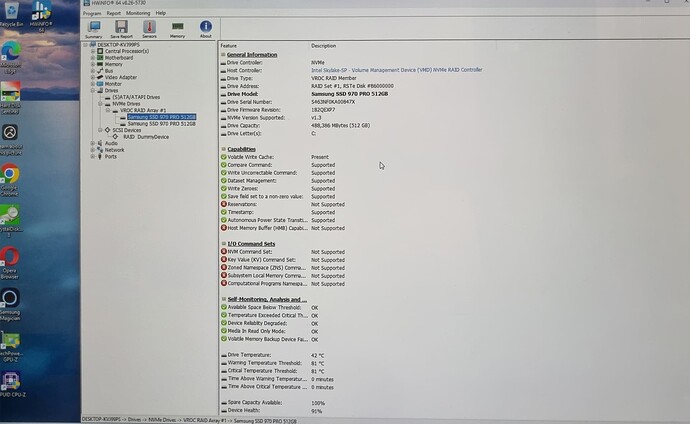

Is VROC a hardware feature or an Intel license we can add? I read that we have to pay $100, which is why I am thinking of a license…

About the slots on my motherboard, honestly I am lost, because there are 4 NVMe slots: the 2 I use are the standard ones on the motherboard. The other 2 are on an ASUS DIMM.2 card, where I have to mount my 2 NVMe drives in a sandwich and plug it into the ASUS DIMM.2 slot.

From what someone told me (I am lost in the manual), the slots I am using now are connected to the chipset, but the ASUS DIMM.2 slot seems to be connected to the CPU… but this needs to be confirmed…

Maybe I am wrong, but the same person said that if I use the ASUS DIMM.2 slot, it would reduce the performance of the graphics card (when I have the money, I plan to buy an EVGA 2080 Ti Ultra overclocked) by 1 to 3%… From that I understand that PCIe slot 1 (x16) is connected directly to the CPU…

This is why I use the current M.2 slots… assuming the guy was right, but it was mission impossible for me to check this information…

Here is the manual of my Z390 motherboard:

https://www.asus.com/Motherboards/ROG-MA…elpDesk_Manual/

I don't know what you think about that…

Since then I found this thread on the ASRock forum where they get crazy performance… What do you think about it? I tried to understand it, but it was a little hard, especially since English is not my first language. They were talking about VROC too, and some use an X299 chipset, but not all of them, and some got better speeds than mine with less capable NVMe drives… if I understood correctly.

The link to this thread on the ASRock forum (I even made a post, but got no answer):

http://forum.asrock.com/forum_posts.asp?..e-m2-raid-setup

Thank you guys for being here to help me. These new things are not really my thing; I haven't changed computers in many years and must learn all these new options and restrictions.

Fingers crossed you'll help me solve this issue.

@Fraizer :

I will try to answer those sentences that I believe are questions. If I miss something, please formulate your questions with question marks at the end of the sentences. That helps me find them more easily.

You need motherboard, CPU, and BIOS support. You also need a hardware key (license) to use some (useful) features. See https://www.intel.com/content/www/us/en/…d-software.html

According to https://www.asus.com/Motherboards/ROG-MA…specifications/ the x16/x8 slot pair is connected to the CPU and the x4 and x1 slots are connected to the chipset.

The manual also designates slots for PEG (PCIe graphics) that are usually connected to the CPU. Note that the number of lanes (x16/x8) matches the specifications page.

The manual states which expansion slots are disabled or reduced when you use SATA and DIMM.2. That is because they share the same PCIe lanes. Based on that, DIMM.2 is connected to the CPU.

If you use DIMM.2, then you will only have x8 remaining for the graphics card. At most 16 PCIe lanes are connected to the CPU on this motherboard, so you cannot have an x16 graphics card and NVMe connected to the CPU at the same time on this system.

Unfortunately the manual says nothing about the M.2 slots, and I haven't found a layout schematic of the motherboard in the manual either. I would assume that those are wired to the chipset.

It does not seem to me that they tried VROC; they just found it in the menu.
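To make the lane arithmetic above concrete, here is a minimal sketch that models the CPU lane budget as a simple subtraction. It assumes, as described above, 16 CPU lanes shared between the graphics slot and DIMM.2, and that a populated DIMM.2 takes 8 of them; `gpu_lanes` is a hypothetical helper, not anything from ASUS or Intel.

```python
# Hypothetical model of the lane sharing described above (an assumption, not
# taken from the ASUS manual): 16 CPU lanes shared by the graphics card and DIMM.2.
CPU_LANES = 16


def gpu_lanes(dimm2_lanes_used: int, cpu_lanes: int = CPU_LANES) -> int:
    """Return the widest standard link width left for the graphics card."""
    remaining = cpu_lanes - dimm2_lanes_used
    # PCIe links run at standard widths, so pick the largest one that still fits.
    for width in (16, 8, 4, 2, 1):
        if width <= remaining:
            return width
    return 0


print(gpu_lanes(0))  # DIMM.2 empty: the graphics card keeps x16
print(gpu_lanes(8))  # DIMM.2 populated (assumed x8): the graphics card drops to x8
```

The point is only that the budget is fixed: any lanes given to DIMM.2 come out of the graphics card's share.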

Unless you have a lot of money to burn, I don’t recommend VROC because it seems to be a very new and expensive technology that has support for only a very limited number of SSD models.

I don’t know your specific use case, but my experience is that even one PCIe 3.0 x4 NVMe SSD is extremely fast.

I would not expect performance improvements for small files when using RAID, only for large files. NVMe RAID for performance might be a waste of money unless its benefits meet your specific needs.

If you want to figure out the best RAID configuration, my recommendation is to buy a cheap and small SATA SSD, install Windows on that, and then you can easily try out multiple configurations (different slots, different stripe sizes, etc.) without having to reinstall Windows.

If you have a lot of money to burn, you definitely should look into more expensive, but hopefully more performant server/enterprise grade hardware, but be sure to check the specifications to ensure that it really is faster.

@Ethaniel

Thank you for those precise and perfect answers!

- If I understand correctly, the two M.2 slots on the ASUS DIMM.2 card are connected to the CPU (which disables SATA; I don't mind that because I have nothing on SATA), but if I use them, my graphics card's PCIe link will drop from x16 to x8… If I understand correctly, that is just not an option: I will not buy an expensive video card to run it at x8…

- Then, if I am not wrong, for a gaming computer, 3D software, and normal use I will get better NVMe speed if I don't make a RAID 0… In that case I will plug in just 1 Samsung 970 Pro 1TB and sell the other one…

It does not seem to me that they tried VROC; they just found it in the menu.

I don't know if you read all 4 pages, but it looks more like they did. They get crazy speeds, 6000, 8000, etc.… some with VROC and some without. But my English at this level (English + tech talk = too much) is too limited to understand everything.

In this thread, a user named "parsec" made a lot of interesting tests and BIOS customizations. I thought there might be something interesting I could apply to my motherboard.

I have no money to burn; I saved for a long time for a new computer. What I do is this: I buy the best of the best I can find and keep the computer for around 6-7 years, provided I don't have issues with the motherboard…

It is the same with the settings: I configure everything as perfectly as possible (Fernando helped me many times in the past ^^ he is very nice and kind), then I touch nothing and just update my drivers and firmware/BIOS.

Actually, I am thinking of selling this motherboard and CPU back, or waiting until X399 is on the market to invest in it. VROC looks very interesting when I see RAIDs of 2 or 3 NVMe drives reaching around 8000 or almost 10,000…

@Fraizer :

Your conclusion is correct. If you are not willing to experiment with various RAID settings, then returning the second NVMe drive is the best option in my opinion.

@Ethaniel

If I understand correctly: I want to try many different settings to get a better speed gain on my current NVMe RAID… If you have other things or settings for me to try, please tell me. I want to try everything before giving up on this issue.

Could you please answer this:

EDIT by Fernando: Quoted post reformatted (to make the source clearer)

@Fraizer :

I could find only one post that might refer to VROC actually being used. A friend of Brighttail is reported to have used RAID on CPU lanes. That indeed sounds like VROC, and parsec refers to it as VROC. Unfortunately, however, very few details were given. I believe that no other benchmarks in that thread involve VROC; they just refer to it as an interesting technology to explore.

Most of parsec’s recommendations are about using different stripe sizes that were recommended in this thread too, but you did not seem to be interested in experimenting with different stripe sizes.

@Ethaniel I chose 64 KB based on what he said.

My English is not so good, but I don't think the benchmarks in this 4-page thread were about testing different stripe sizes, right? On page 2 we can see a benchmark of about 6K, while 3 others show 9K.

I thought before that they were using the VROC you talked about. This is why I am asking you, as an expert, what you think about their posts.

@Fraizer :

Correct, the primary goal of that thread is creating a RAID array using 3 NVMe SSDs on an X299 chipset board. Most of the posts revolve around that topic, but they talk about performance and VROC too.

For optimal performance, stripe size should be the same as the native block size of the drive. Unfortunately it is not usually documented, so the best way of figuring it out is by trying different options. Because each model has different characteristics, benchmarks with other models do not reflect what you should expect with your model.

By trying only one stripe size, you might get lucky, but that does not seem to be the case for you. The best way of finding the optimal settings for your specific system (including all the various hardware and software components) is by trying and benchmarking multiple settings and comparing the results.

I thought parsec said, as in a post by Fernando, that a stripe size of 64 or 128 KB was optimal for NVMe.

If I can get their speeds, I will of course test different stripe sizes, even if I must completely reinstall Windows 10.

Which stripe sizes do you recommend I test, and which should I start with?

Can I expect their crazy speeds, 6K or 9K? Or is that only because they use an X299 chipset, which has many lanes connected directly to the CPU without affecting the other devices?

Thank you, Ethaniel

@Fraizer :

parsec only made recommendations on what to try because each model is different.

Ideally you should first test only one SSD without RAID. Then test each available stripe size in RAID separately. This is the only way to find the optimal configuration.

That's why I recommended that you get a cheap SATA SSD and install Windows on it, so that you don't have to reinstall Windows so many times. Once you find the optimal configuration, you can install Windows on the NVMe SSDs.
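The testing procedure above can be written down as a simple checklist: one non-RAID baseline, then one RAID 0 run per stripe size, then pick the winner from your own measurements. This is a sketch with hypothetical helper names; the stripe size list is an assumption about what Intel RST usually offers for RAID 0, so check the options your BIOS RAID utility actually shows.

```python
# ASSUMPTION: typical Intel RST RAID 0 stripe sizes; verify against your own BIOS menu.
STRIPE_SIZES_KB = [4, 8, 16, 32, 64, 128]


def test_plan(stripe_sizes_kb=STRIPE_SIZES_KB):
    """Hypothetical checklist: baseline first, then one RAID 0 run per stripe size."""
    plan = ["single SSD, no RAID (baseline)"]
    plan += [f"RAID 0, {kb} KB stripe" for kb in stripe_sizes_kb]
    return plan


def best_config(results: dict) -> str:
    """Return the configuration with the highest score among YOUR measured results.

    `results` maps each plan entry to the metric you care about
    (e.g. sequential read in MB/s from the same benchmark tool every time).
    """
    return max(results, key=results.get)


for step in test_plan():
    print(step)
```

Always keep the baseline in `results`: if it wins, RAID 0 is not worth it for that metric.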

Unfortunately I don’t know whether you will find any RAID configuration that has better performance than a single SSD. You should not expect “crazy speed” because RAID 0 only improves reads/writes on large files.
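A minimal sketch of why small operations do not benefit: RAID 0 splits the volume into stripes and hands them to the drives in turn, so a request smaller than one stripe lands on a single drive, while a large request spans stripes on every drive and can be served in parallel. `drives_touched` is a hypothetical illustration of plain round-robin striping, not Intel RST's actual code.

```python
def drives_touched(offset: int, length: int, stripe_size: int, n_drives: int) -> set:
    """Return which member drives a RAID 0 request of `length` bytes at `offset` hits.

    Assumes simple round-robin striping: stripe k lives on drive k % n_drives.
    `length` must be at least 1 byte.
    """
    first = offset // stripe_size
    last = (offset + length - 1) // stripe_size
    if last - first + 1 >= n_drives:
        # The request spans at least one stripe per drive, so every drive works.
        return set(range(n_drives))
    return {stripe % n_drives for stripe in range(first, last + 1)}


KB = 1024
print(drives_touched(0, 4 * KB, 64 * KB, 2))     # 4K read: only one drive does the work
print(drives_touched(0, 1024 * KB, 64 * KB, 2))  # 1 MB read: both drives work in parallel
```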

I believe that RAID will not work if you connect the NVMe SSDs to the CPU because the chipset provides the RAID support.

X299 with a Xeon CPU probably has better performance. In any case, random small operations are not expected to improve much; only large sequential operations are expected to improve, and only up to 2x speed.

@Ethaniel

Did you see the last post on this page, where he gets 6925 with 2x 960 EVO on a Z270 chipset?

http://forum.asrock.com/forum_posts.asp?..e-m2-raid-setup

Can we expect something around that once we choose the right stripe size?

@Fraizer :

Unfortunately I don't know what to expect exactly, but it will not be more than 2x the speed of one drive. I believe that you have not posted such a single-drive benchmark.
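The "not more than 2x" rule is easy to check against your own numbers: measure one drive without RAID, multiply by the number of drives to get the theoretical best case, then see what fraction of it your RAID 0 result reaches. This is a sketch with hypothetical helper names; the ceiling is an idealized upper bound that ignores every other bottleneck in the system.

```python
def raid0_ceiling(single_drive_mbps: float, n_drives: int = 2) -> float:
    """Idealized best case for RAID 0 sequential throughput: n times one drive."""
    return single_drive_mbps * n_drives


def scaling_efficiency(raid_mbps: float, single_drive_mbps: float, n_drives: int = 2) -> float:
    """Fraction of the idealized ceiling that a measured RAID 0 result reaches."""
    return raid_mbps / raid0_ceiling(single_drive_mbps, n_drives)
```

Use the same benchmark tool and the same test for both numbers; comparing a sequential result with a 4K result, or results from different tools, is meaningless.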

4K reads/writes are expected to be slower than non-RAID.

If you are using it for OS and gaming, you most likely will see no real performance improvement, even if some benchmark metrics are going to be better.

@Ethaniel

I am trying to find some free time from work to run the tests… but it is hard right now.

Ethaniel, in case I don't use RAID and just run a single NVMe drive normally, can you please tell me whether I need to install the latest RST drivers and update my RST OROM?

I read on different websites that installing the RST drivers gives a performance gain even without RAID… hmm, is that true? If so, will I need to update my OROM?

@Fraizer :

Although you asked Ethaniel, I will give the answers:

After having deleted your currently existing NVMe RAID array from within the Intel RST RAID Utility (don't forget to store your important data outside the RAID array before you do that), your NVMe SSDs will neither need nor use any Intel RST driver or Intel RST RAID BIOS module. Consequences:

1. The Samsung NVMe Controllers of your non-RAIDed NVMe SSDs can use either the MS in-box NVMe driver or the specific NVMe drivers (currently best choice: v3.0.0.1802).

2. A BIOS update regarding the Intel RST RAID modules is neither required nor useful.

@Fraizer ,

Have you checked what your link speed is in HWiNFO? Since it's a Z390, I presume you're running the latest UEFI RAID ROMs. I would uninstall the Intel RST package and run your benchmarks bare metal, without the overhead of the RST RAID software. Also run Anvil benchmarks with just one drive, as Fernando is so very fond of that performance suite. Once you have a baseline benchmark, RAID the drives and test with different RST packages. My feeling is that the overhead of the chipset RAID software is holding you back; plus, it's a new chipset, and who knows, there could be BIOS bugs holding you back too. For one, make sure you're running the latest BIOS version, or even try the last stable BIOS version for comparison.