Hello World, Hello Fernando!

I need some advice on getting a brand-new small server to run the way I planned. The plan is to use the Intel “software” RAID driver solution on four SSDs. For this I need some advice and information on the available Intel drivers and on how, and with which components, they work.

I am not a complete noob on this since I set up a pretty similar solution on a server with some older components.

What I have:

Brand-new Supermicro X11SCA-F board - that is a socket 1151v2 board using the Intel C246 chipset (that should be roughly the same chip as an H370 or Z390 chipset but for Xeon CPUs).

Currently an i3-8100 (which is also supported by that board since it also has ECC memory support) that will later be upgraded to a Xeon E-2146G or 2176G when they are finally available to buy for retail customers.

Two Samsung SM883 SATA SSD drives and two Intel DC P4510 NVMe SSDs.

Windows Server 2016 Essentials (Build 1703)

What I want:

Raid1 Mode on those Samsung SSDs and use them as the OS partition

Raid1 Mode on the two Intel DC NVMe drives to use them as the data partition (programs and database)

What I already did:

Switched the SATA controller to RAID in the BIOS - got the RSTe RAID option (UEFI module I guess) after I rebooted into the BIOS - created the RAID 1 array on the two Samsung SSDs and installed Server 2016 in UEFI mode to that array.

That worked out great. Windows used the built-in drivers, which are 13.2.0.something for the Intel SATA RAID and the “standard Microsoft NVMe” drivers, which also detected both Intel NVMe SSDs. By the way, these are in the 2.5 inch format and use the U.2 interface, but I didn’t use the onboard U.2 port (that’s probably connected to the chipset using 4x PCI-E 3.0 lanes and is shared with the M.2 port on the board, which I don’t use either). Instead I use two PCI-E slot adapter cards that each have the required U.2 SFF-8639 port and mount the drive. LINK to that card: https://www.startech.com/HDD/Adapters/u2…ssd~PEX4SFF8639

Please note that these cards have no controller/raid/hba chip nor any other logic on them - but simply wire the PCI-E lanes to the U.2 drive.

These two cards are plugged into the two 16x PCI-E slots that come directly from the CPU (not the chipset!) - running in 8x/8x configuration since the socket 1151 CPUs only offer 16 PCI-E lanes directly off the CPU.

Anyway - the two Intel SSDs are detected in the BIOS as NVMe drives and are fully usable under Windows as single drives, BUT … and that’s what leads us to:

What problem I have:

They are not listed in the Intel RAID option ROM/UEFI module in the BIOS - so I can’t create a RAID 1 from these.

Okay, as I mentioned above - what I did in 2017 to an older system:

Supermicro X10SRi-F socket 2011-3 board using the Intel C612 chipset

Xeon E5-1650 v4 (Broadwell-E)

Two Samsung SM863 SATA SSDs and two Intel DC P3510 NVMe SSDs (these are 4x PCI-E cards directly for the PCI-E slots).

Windows Server 2012 R2 Standard

Same constellation - Raid 1 for the Samsung SATA SSDs for the OS and Raid 1 for the Intel NVMe SSDs for data.

Configured the SATA controller as RAID - created the RAID 1 for the Samsung SSDs in the BIOS and installed Windows 2012 R2 in UEFI mode on that RAID 1.

Once in Windows I downloaded and installed the package you also list here “Intel RSTe NVMe RAID Drivers & Software Set v4.6.0.2125” that includes the RSTe NVMe driver especially for Intel DC P3500/3600/3700 SSDs (so the P3510 is also supported). For the NVMe drives it uses the IaRNVMe.sys driver and it also installed the “Intel(R) RSTe Virtual Controller” controller.

In the “Intel(R) Rapid Storage Technology enterprise” GUI (the systray icon/program to launch) I could see all the drives connected to either the SATA Ports AND the NVMe drives. The SATA RAID 1 consisting of the two Samsung drives was there and I successfully created the RAID 1 using the two Intel NVMe drives.

On the new server I thought (since the hardware is younger) I could use the “Intel RSTe Drivers & Software Set v5.5.0.1367 WHQL for Win8-10” drivers since it seems like these are the successors to that v4.6.0 version mentioned above. They installed fine and updated the drivers for the SATA RAID controller and installed the GUI. Everything looks good besides that there are no NVMe drives shown in the GUI. Okay - there are no NVMe drivers in that package and since the two Intel DC P4510 drives are still using the standard Windows NVMe driver I thought I need the Intel driver for these drives to be recognized in the RSTe GUI software. So I installed the “Intel NVMe drivers v4.0.0.1007” that are compatible with these drives - it worked and now the standard Windows NVMe drivers are gone and it shows the Intel drives with that intel driver. The driver it uses is called IaNVMe.sys (so I guess not really similar to that IaRNVMe.sys [note the R in there] used in the older server). And the drives are still not shown in the RSTe GUI software to manage and maybe create an array.

Then I recalled the big outcry when Intel released its socket 2011-3 successor, the socket 2066 with the X299 chipset, and the server counterparts also using that socket or even the bigger socket 3647. Intel introduced the VROC (Virtual RAID on CPU) technology and, along with it, hardware (?) keys that have to be bought and installed to enable certain features of that software. I remember that back in early 2017, when I built the old server, it was only possible to RAID NVMe drives directly connected to the CPU if you used a Xeon and Intel NVMe SSDs. But they would not be bootable, which was fine with me. Now with the VROC technology it is possible to RAID NVMe drives, even non-Intel ones, and even boot from them - but you need to buy a key for that and I am not really sure what platforms besides the socket 2066 and 3647 are supported.

What I also tried on the new server:

“Intel RSTe NVMe RAID Drivers & Software Set v4.6.0.2125” that I used on the old server - but the Setup.exe says that my system doesn’t meet the requirements. That might be because these drivers support Windows 10 and Server 2012 R2 but do not list Server 2016. It might also be because I don’t have a P3500/3600/3700 SSD in the system but a P4510 that obviously isn’t listed in the driver INF file. It might also be because I don’t yet run a Xeon in there, but an i3. Or it might be because this is the smaller socket 1151v2 with a C246 chipset. The readme states that it requires a C600+/C220+ or C610 series controller.

A manual forced installation of the NVMe driver (IaRNVMe.sys) for the P4510 drive renders it unusable with a mark in device manager that the driver couldn’t be started or something.

Since you stated in your first post in this thread that QUOTE “All Intel RST(e) SATA RAID drivers from v14.8 series up do support NVMe RAID arrays as well. Supported are only modern Intel systems from 100-Series up, whose Intel SATA Controller has been set to RAID mode.” I downloaded and tried I think Intel RST(e) AHCI/RAID Drivers & Software Set v15.8.2.1009 WHQL. That installed fine but shows me only the SATA drives as the RSTe v5.5.0 above. So I guess your statement might only apply to NVMe drives that are connected to the chipset, not the CPU, am I guessing right?

Since there seems to be no updated IaRNVMe driver (from the RSTe NVMe RAID Drivers & Software Set v4.6.0.2125 I am using on the old server) I guess I am out of luck, right?

Any other ideas?

The VROC does not seem to be supported on that board either since I did not find a port to plug a possible 4 pin VROC key in and also there is no word about that in the Supermicro mainboard manual. I don’t really want to pay Intel for a feature that was once free.

A thing I might try is to use the software mirror option of Windows itself (not Storage Pool but the basic function in the disk management of windows). But I am sceptical on a possible performance loss and/or reliability of that feature.

@bucho:

Since I have no own experience with Intel C246 chipset mainboards, the creation of an Intel RST NVMe RAID1 array and the usage of Windows Server 2016 as OS, I may not be able to give you any serious advice.

Questions:

- For which tasks do you use your system?

- What were/are your reasons to install the OS onto the Intel RAID1 array consisting of 2 SATA SSDs?

I would have installed the OS onto one or both (as Intel RAID0 array) of the NVMe SSDs to get the maximum benefit from the NVMe data transfer protocol.

- Which are the BIOS options regarding the Intel SATA Controllers and which of them did you choose?

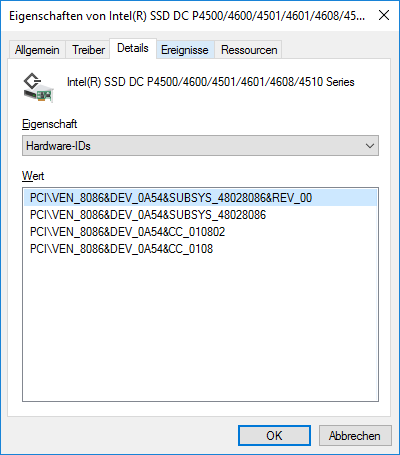

If possible, please post a screenshot of that BIOS page.

- Which are the HardwareIDs of your currently in-use “Storage Controllers” and which specific drivers do they use?

Hello Fernando and thanks for your quick reply.

I know that probably no one around here has a similar setup, but you surely have more insight into the Intel drivers than I do and maybe you can figure something out.

To answer your questions:

1. This will be a small server, running 24/7, and will have a database (Oracle) and a few specialized programs, like a data warehouse system and a report generating system, that access the database. There will be max. 3 (maybe some more in the future) simultaneous clients working with these programs and some more clients that are always connected but will simply be inputting a few chunks of data into the database.

2. RAID1 simply because of fault tolerance if one of the SSDs dies or has some other problems so that the server keeps on running/working until maintenance where the system will be shut down and the faulty part will be replaced. So hot-swap, hot-spare should not be necessary.

And I am rather old school in keeping the OS and DATA on separate drives to be able to create full backups of each and have the ability to roll back/switch data if necessary. The OS does not need to be that performant since it boots up once and only goes down for updates, maintenance and so on. The database and programs/program data should be on the faster NVMe drives since they will benefit from the high IOPS and also high transfer speeds (for backup/extraction/import purposes).

3. See here:

In the Advanced tab there are some options:

In the option “SATA and RST configuration” it looks like this:

I can enable/disable the controller and set it to AHCI or RAID. I can set the storage option ROM to Disable, Legacy or UEFI - I took UEFI.

Then there are just all SATA Ports listed and if there is a device detected which are just the two Samsung SATA SSDs.

So after I switched the SATA controller from AHCI to RAID the option “Intel RSTe SATA Controller” (as you can see in the Advanced tab) popped up (after a reboot). In there it looks like this:

There is my RAID1 of the two Samsung drives and I cannot do anything besides manage the existing array or create a new one, but as I mentioned the NVMe drives are not listed there, just the Samsung SATA drives.

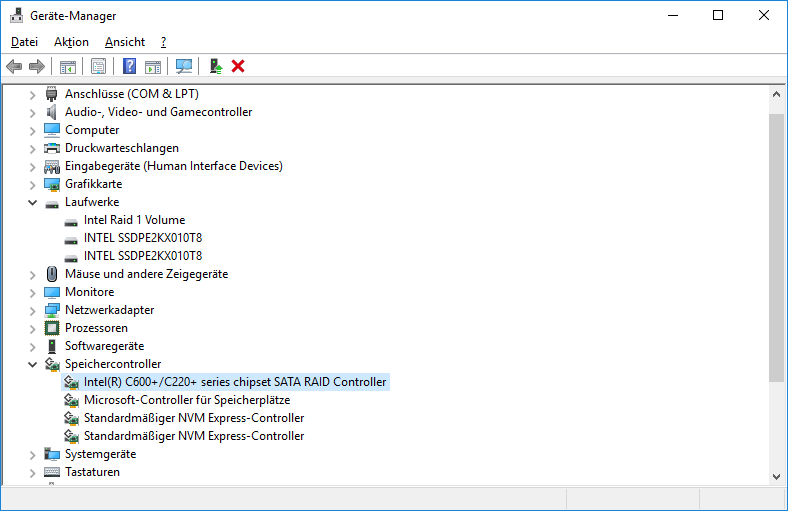

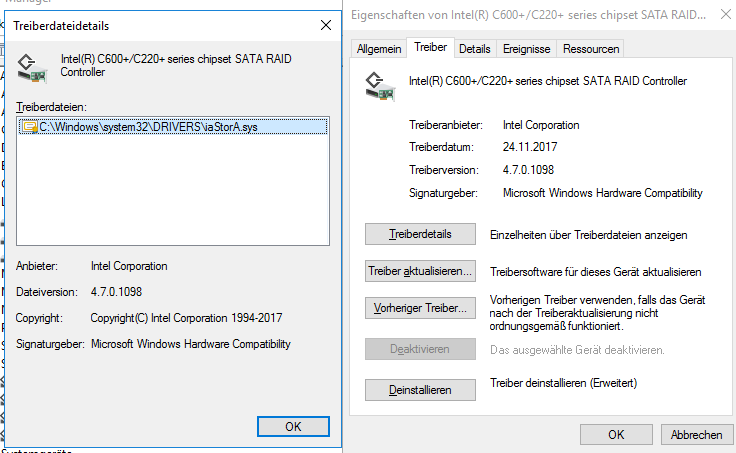

4. Here is the screenshot of the device manager - using the standard Microsoft drivers for the NVMe drives and the RSTe drivers 4.7.0.1117 for the SATA controller (as I wrote in my last post I already tried the RSTe 5.4.0.1453 too and the RST 15.7.7.1028 too but all show the same result, I might try the RSTe 5.5.0.1367 and the RST 16.7.1.1012 too).

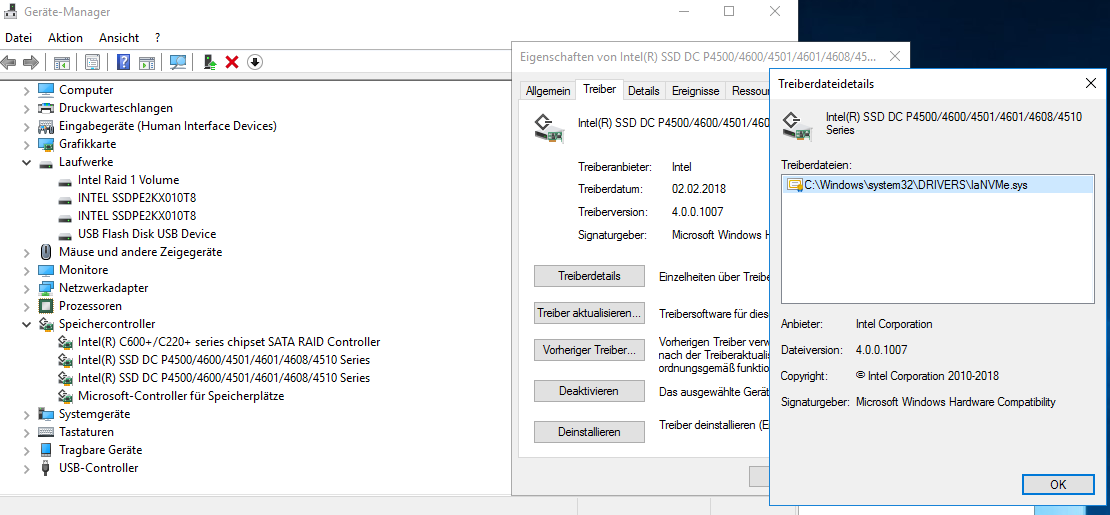

And here is the device manager if I switch the driver of the NVMe devices from the Microsoft one to the Intel NVMe drivers 4.0.0.1007

So I am still thinking that if the NVMe drives were connected to PCI-E lanes coming from the chipset - a RAID might work. And if they are connected to the CPU (as they currently are) I would need a “virtual” controller to set up the RAID like the “Intel(R) RSTe Virtual Controller” I got installed on the old server (Xeon E5 Broadwell with C612 chipset using the Intel RSTe NVMe RAID Drivers & Software Set v4.6.0.2125) or this new VROC driver to also create a “Virtual Raid on CPU” - but I guess that my platform is not supported.
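That hypothesis can be written down as a small Python sketch. It encodes nothing more than the guess above (RST only manages NVMe drives on chipset lanes, CPU lanes would need a virtual controller); the `NvmeDrive` class, the slot labels and the function name are hypothetical, and none of this is confirmed behaviour of the Intel drivers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NvmeDrive:
    name: str
    slot: str         # free-text label, made up for this sketch
    attached_to: str  # "cpu" or "chipset"


def rst_manageable(drive: NvmeDrive) -> bool:
    """Unverified guess: the RST option ROM/GUI only sees chipset-attached NVMe drives."""
    return drive.attached_to == "chipset"


# Current layout: both P4510 drives sit in the CPU-attached x16 slots (8x/8x).
drives = [
    NvmeDrive("Intel DC P4510 #1", "PCI-E x16 slot A", "cpu"),
    NvmeDrive("Intel DC P4510 #2", "PCI-E x16 slot B", "cpu"),
]

for drive in drives:
    verdict = "RST RAID possible" if rst_manageable(drive) else "needs a virtual controller (RSTe NVMe/VROC)"
    print(f"{drive.name} on {drive.slot}: {verdict}")
```

If the guess holds, moving a drive to a chipset-attached slot should flip the result, which is exactly the experiment worth trying on the real board.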

I can switch one of the Intel NVMe SSDs to the PCI-E 4x slot on my board that is wired to the chipset and see if it shows up in the RSTe section in the BIOS or the Intel GUI tool in Windows. I could use that slot and the U.2 port on my mainboard, which are both wired to the chipset … but I am afraid that I might lose performance since the chipset is only connected via DMI 3.0 (that’s 4 x PCI-E 3.0 lanes) to the CPU and hence memory.
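The size of that possible loss can be estimated with some quick arithmetic. The Python sketch below uses only the theoretical PCIe 3.0 figures (8 GT/s per lane, 128b/130b encoding) and assumes that each U.2 drive uses four lanes, that DMI 3.0 behaves like a PCIe 3.0 x4 link, and that nothing else (SATA, network, USB) competes for the uplink. Real throughput will be lower, and the function name is just for illustration.

```python
# PCIe 3.0 signals at 8 GT/s per lane and uses 128b/130b line encoding.
RAW_BITS_PER_SECOND_PER_LANE = 8e9
ENCODING_EFFICIENCY = 128 / 130


def pcie3_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, ignoring protocol overhead."""
    return RAW_BITS_PER_SECOND_PER_LANE * ENCODING_EFFICIENCY * lanes / 8 / 1e9


# CPU slots: each drive gets its own x4 link.
cpu_per_drive = pcie3_gb_per_s(4)

# Chipset: both drives would share the single DMI 3.0 uplink (x4 equivalent).
dmi_uplink = pcie3_gb_per_s(4)
chipset_per_drive = dmi_uplink / 2  # assumption: both drives busy at the same time

print(f"CPU-attached, per drive:     {cpu_per_drive:.2f} GB/s")
print(f"Chipset-attached, per drive: {chipset_per_drive:.2f} GB/s "
      f"(sharing a {dmi_uplink:.2f} GB/s uplink)")
```

In a RAID 1 every write goes to both drives at once, so the shared uplink would matter most for writes, while a single-drive read could still use the whole uplink.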

@Bucho :

As you have probably already realized, I have moved our discussion into a new thread. This way other interested users will be able to find it more easily. Furthermore your main problem seems to be the creation of an Intel NVMe RAID1 array.

This is what I recommend to try:

1. Download the 64bit Intel RST driver v16.5.5.1040 WHQL from >here< and unzip it.

2. Run the Device Manager and update manually the NVMe driver of both listed “Standard NVM Express Controllers” by pointing to the file named “iaStorAC.inf”. You have to force the installation by using the “Have Disk” option. Choose the shown compatible device named “Intel(R) NVMe Controller”.

3. After the successful installation of the Intel NVMe driver v16.5.5.1040, enter the BIOS and look, whether you can create from within the Intel RSTe SATA RAID Utility the desired Intel RAID1 array consisting of the 2 NVMe SSDs.

Note: Make sure that the SATA ports your 2 SATA SSDs are connected to do not use the same PCIe lanes as your NVMe SSDs. Look into your mainboard manual for details.

as far as i know (i don’t use vroc myself), VROC requires intel branded drives if you need to boot from them. non intel drives can be raided but will not be bootable

for the intel RST raid 1 setup, as i recall you must install an os on one of the drives to be raided, then install the RST driver/GUI console software, reboot and then add the other drive as a raid-1 configuration. this writes the raid code to the drives (but erases the disk information), requiring you to install the OS again, but this time the setup should pick up the two drives that you just wrote the array code to. note that this setup is not recommended for mission critical work, as it’s a kludge to get the raid code on the drives you want to boot from this way

http://asrock.pc.cdn.bitgravity.com/Manu…age/English.pdf

i personally have not used the intel software myself as i have always used a real raid controller like the LSI 9270

Hello Fernando and thanks for the reply / the movement of my problem to a new thread.

Okay I downloaded and installed the 16.5.5 drivers. It automatically updated my NVMe controllers to that driver (iaStorAC.sys and IaStorAfs.sys and some exe and optane.dll) and they are now named “Intel(R) NVMe Controller”. The SATA RAID controller didn’t update and still had the driver that Windows came with - 13.2.0.1022 (IaStorAV.sys).

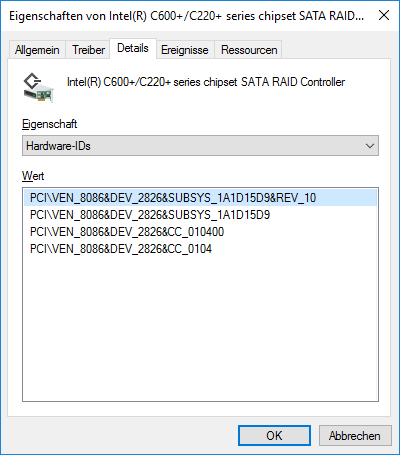

The Intel Tray Icon shows that everything is good (green) but when I try to open the RST GUI the program states that I need to update as the driver/program has new features and states that my driver is 13.2.0.1022 and the updated is 16.5.5.1040. I have to press X or OK and both options quit the program right away. The SATA RAID controller has the VEN_8086 and DEV_2826 as you can see in my screenshots above. There is no entry in the INF file - only for 2822 and some others.
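The mismatch the GUI complains about is a plain version comparison. Here is a minimal Python sketch of such a check, assuming the console simply compares the four dotted fields numerically; how the Intel software really decides is not documented here, and `parse_version` is a hypothetical helper.

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '16.5.5.1040' into (16, 5, 5, 1040) so the fields compare numerically."""
    return tuple(int(part) for part in text.strip().split("."))


driver = parse_version("13.2.0.1022")   # SATA RAID driver that Windows came with
console = parse_version("16.5.5.1040")  # version the RST GUI asks for

if driver < console:
    print("driver is older than the console software, GUI refuses to start")
else:
    print("driver is current enough for the console software")
```

Comparing tuples of integers avoids the trap of comparing the raw strings, where "9.0" would sort after "16.0".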

So I manually forced an update of the SATA controller to that driver (RST Premium) and it worked - rebooted and the GUI finally works.

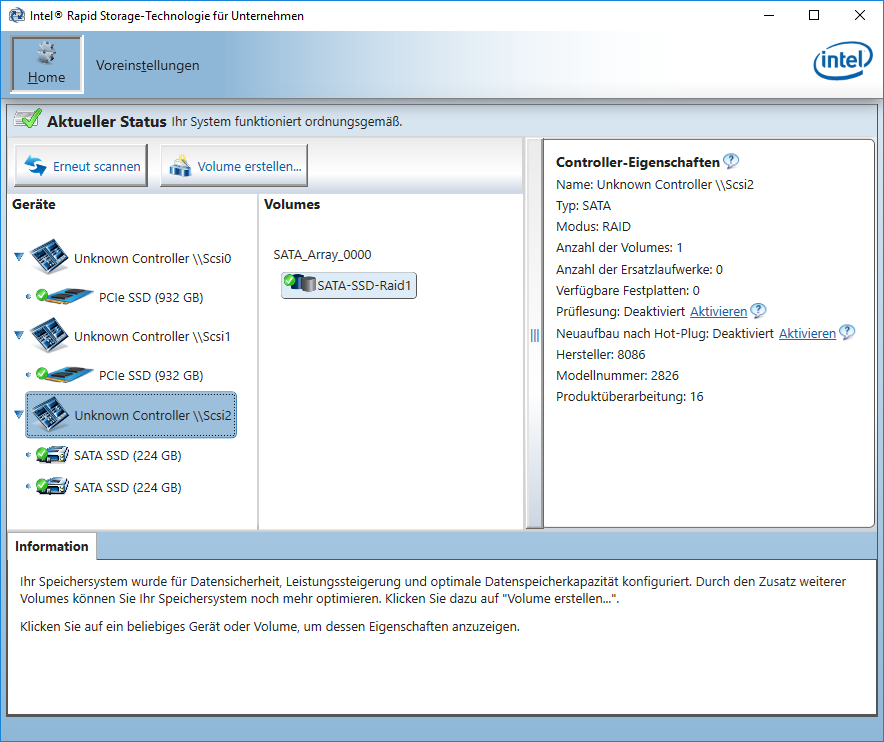

BUT - it still seems to have problems detecting my controllers / drives. Here is a screenshot:

It detects the RAID1 I already created in the BIOS/UEFI consisting of the two SATA SSDs. As you can see it has trouble detecting the SATA RAID controller and of course the NVMe controllers. At least the drives show up. The SATA RAID controller is detected as “Unknown Controller \SCSI2” - but when I click on it, it knows that it is SATA and RAID1 and even lets me activate/deactivate some things like hot-plug. Also the SATA RAID array seems to be detected correctly, as I am able to rename it, change its type or expand it.

The NVMe controllers are detected as “Unknown Controller \SCSI0 and 1” and when I click on them it says Type:unknown Mode:AHCI (which is wrong - should be NVMe) and so on. When I click on a PCIe SSD drive it shows correct infos like Type: PCIe SSD with correct size and sector size, model and serial number.

When I try to create a volume I can select the controller in the first step where I can choose between SATA and PCIe - but I can only select SATA since PCIe is greyed out.

I guess I will try to juggle with drivers once more and see if the RST GUI shows something else.

Funny that the RST driver 16.5.5 states in the Readme that the C246 chipset is supported, but when I switch it from AHCI to RAID (DEV_2826) it isn’t supported in the drivers.
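Whether a driver package will bind to a controller on its own comes down to whether the controller's hardware ID appears in the INF file. The Python sketch below shows that kind of check. The INF excerpt is a made-up stand-in (only DEV_2822 is taken from what I saw in the file), `ids_in` is a hypothetical helper, and the real iaStorAC.inf contains more entries and more detail.

```python
import re

# Matches the vendor/device part of a PCI hardware ID, e.g. VEN_8086&DEV_2826.
HWID_RE = re.compile(r"VEN_([0-9A-F]{4})&DEV_([0-9A-F]{4})", re.IGNORECASE)


def ids_in(text: str) -> set:
    """Return every (vendor, device) pair mentioned in the text, upper-cased."""
    return {(ven.upper(), dev.upper()) for ven, dev in HWID_RE.findall(text)}


# Hypothetical INF excerpt for illustration only, not copied from the real file.
inf_excerpt = r"""
[INTEL.NTamd64]
%DeviceDesc_RAID% = iaStorAC_inst, PCI\VEN_8086&DEV_2822&CC_0104
"""

controller_hwid = r"PCI\VEN_8086&DEV_2826&CC_0104"  # SATA controller in RAID mode

supported = ids_in(inf_excerpt)
controller = next(iter(ids_in(controller_hwid)))

if controller in supported:
    print("INF lists this controller, the driver installs normally")
else:
    print("INF does not list this controller, only a forced install is possible")
```

Running the same check against the real INF text (read from the unzipped driver folder) would show quickly which RAID device IDs a given package covers.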

I still think that if I connect the NVMe SSDs to the PCI-E lanes of the controller it might work somehow. And I think (since you stated that in your last sentence) that there should be no conflict between SATA ports and the PCI-E lanes the SSDs are currently on since they are directly connected to the CPU. (It sure makes a difference if I connect them to lanes coming from the C246 chipset.)

@DeeG

Thanks for your contribution.

As I said above - before Intel released the VROC technology, RAIDing NVMe SSDs and booting from them was limited to Intel SSDs only. Now with VROC you can even RAID and boot from NVMe SSDs not branded by Intel - but you need a VROC key for that.

Since I don’t want to boot from them (but they are Intel SSDs anyway) I don’t really care about that option. I just want a RAID 1 out of the NVMe SSDs that are connected directly to the CPU since there is no bottleneck (DMI 3.0 <-> chipset) and maybe use the Intel RST driver/option ROM/EFI module since I think they know best how to handle RAID with their controllers and drives.

VROC doesn’t seem to be an option anyway since my Supermicro board has no connector for a VROC key.

The pdf RST procedure you posted the link for is a rather basic tutorial and seems to be very old (Windows 7 used). I don’t think that installing the OS to one of the NVMe SSDs will change anything since the drivers still won’t detect the controllers the right way.

Some years ago I also usually built RAID arrays with real hardware RAID controllers like LSI and Adaptec. But since SSDs evolved so fast, some of the older RAID controllers limit SSDs or at least can’t handle them well enough, and with NVMe SSDs hardware RAID controllers would cripple the performance even more. The only controller I could find when I quickly checked is the HighPoint SSD7101A-1, which has four M.2 slots and PCI-E 16x. It would be interesting to see benchmarks of drives in there in a RAID configuration compared with these drives directly connected to a CPU using VROC or a similar RAID setup (like the older server I built, as described in my first post).

@Bucho :

Questions:

1. Which Intel RST Console Software do you currently use?

2. Does your BIOS offer the option to switch from RSTe (DEV_2826) to RST (DEV_2822) mode?

If not, you can try to install the “Universal 64bit Intel RST AHCI+RAID drivers v16.5.5.1040 mod+signed by Fernando”, which supports the DEV_2826 Intel SATA RAID Controller.

Don’t forget to import the Win-RAID CA Certificate (if not already done), before you start the installation.

the steps for creating a raid array on the RST console have not changed since windows 7, the steps remain the same no matter which OS is being used

you should not mix and match RST versions. your supermicro board uses a different chipset than consumer boards do and as such the consumer RST packages will not have support for your workstation chipset. you should uninstall the current RST drivers, and install the listed RSTe driver from the supermicro site for your model board

last, you have an unknown device (missing drivers), this needs to be resolved by again installing the supermicro drivers for your model board

just to recap, workstations use cxxx chipsets that are NOT SUPPORTED by the consumer RST driver package, you must install the enterprise version of the RST which DOES HAVE SUPPORT for the cxxx chipsets

Hello Fernando,

1. I use the v16.5.5.1040 that came with the driver.

2. No - as you can see in my second post I am just able to switch between AHCI and RAID. After I pick RAID and reboot there is one more option in the Advanced tab of the BIOS which is the RSTe SATA controller where I can just create and modify RAID arrays.

I tried your modded driver but nothing changed in the GUI console software. It still says "Unknown Controller".

So although the 16.5.5 driver works with the controller, the GUI console software does not fully work with the RST controller (DEV_2826) and/or the new C246 chipset. Do you think I should try the 16.7.0 drivers and GUI?

@DeeG

The basic steps for creating a SATA (!) array might not have changed drastically, but since Win7 the hardware changed quite a bit. (Legacy) BIOS -> UEFI, PCI-E NVMe based storage that can be connected directly to the CPU, not only the PCI-E lanes of the chipset and so on. With UEFI the "BIOS" method for creating an array changed, since the Intel RST option ROM or EFI module looks different and is usually accessed through the BIOS/UEFI setup and not by pressing Ctrl + I.

I am aware that there are different types of RST drivers and software as I have some experience with computers since the 386 and used these drivers (sometimes modded) on desktops, workstations, servers and laptops. A few years back it was rather clear what type/version to use for what system, but it seems like Intel wants to split the classic desktop consumers and at least the server/workstation (and HEDT) segment further apart. That VROC is a clear step in that direction. Right now in my case it is very unclear what to use.

The current "consumer RST package" lists my chipset - see here:

https://downloadcenter.intel.com/downloa…face-and-Driver

So if I install the driver/software from the Supermicro site I will get the version 4.7.0.1099, which does not have any drivers for any NVMe drives/controllers. Great thing for a board that has two M.2 (PCI-E 3.0 4x) and a U.2 (PCI-E 3.0 4x) expansion options. So the logical "next" driver version should be the 5.x, right? But these drivers also have no NVMe drivers except the 5.4.0 VROC package drivers. But these don’t seem to work on C2xx chipsets, just on the C620 and C422 series (Xeon Scalable, as Intel calls them), oh, and the Xeon D-2100 series.

And @DeeG the "unknown device" isn’t a device in the Windows device manager, but just shows in the Intel RST GUI console software. In device manager all drivers are installed and seem to work.

UPDATE:

Okay I played around some more … if I plug one of the Intel PCI-E SSDs into the 4x Slot of the board (that has PCI-E lanes connected to the chipset rather than the CPU) a new option pops up in the "SATA and RST Configuration Menu" in the BIOS (see second screenshot in post #3). There now is an additional line that lists the PCI-E 4x Slot and is called "PCI-E M.2-M1" (the "M1" M.2 socket is shared with that PCI-E 4x slot and the second M2 is shared with the onboard U.2, all coming from the chipset).

There I can choose between "Not RST Controlled" and "RST Controlled". If I leave it on "Not RST Controlled" (default) it shows up just like when I plug it into the PCI-E 16x slot of the CPU.

If I switch to "RST Controlled" the SSD is gone - both in Windows and in the BIOS. So my guess is that this option is only relevant if I use an M.2 that still uses the SATA AHCI protocol instead of the NVMe protocol.

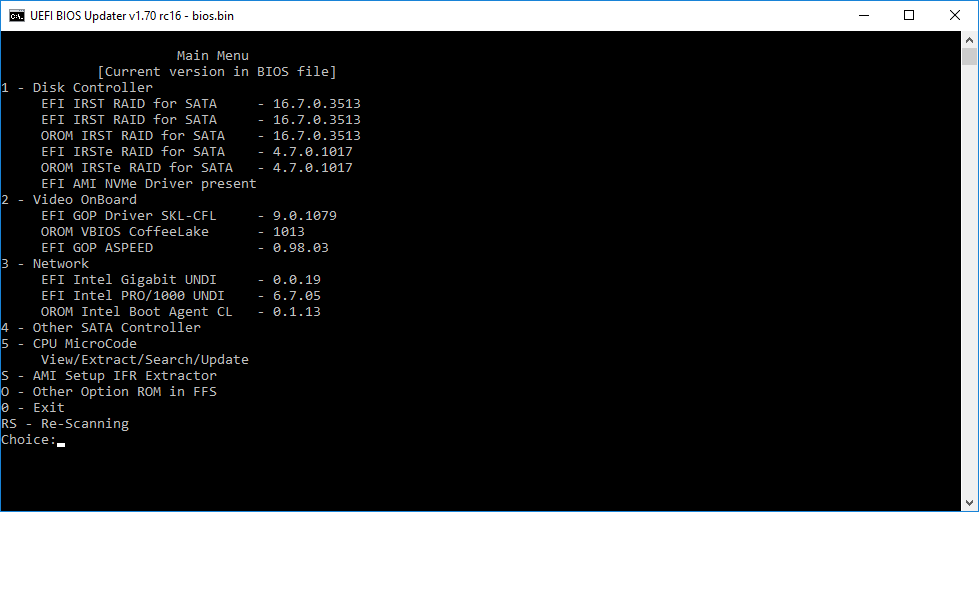

Next thing I did, since I have some experience in BIOS modding, I used the latest UBU to check what’s in the BIOS. Here:

So as you can see there are some option ROMs and EFI modules, the IRSTe 4.7.0.1017 are currently used. But why are the regular "consumer" 16.7.0.3513 also there if they are not used? Or are they used if I switch to AHCI, I guess not, right? Fernando any ideas?
